What is a Type II error?

In hypothesis testing, a type II error is the error that occurs when one fails to reject a null hypothesis that is actually false. A type II error, an error of omission, produces a false negative. For instance, a disease test may return a negative result even though the patient is infected. This is a type II error because we accept the test's negative conclusion even though it is incorrect.

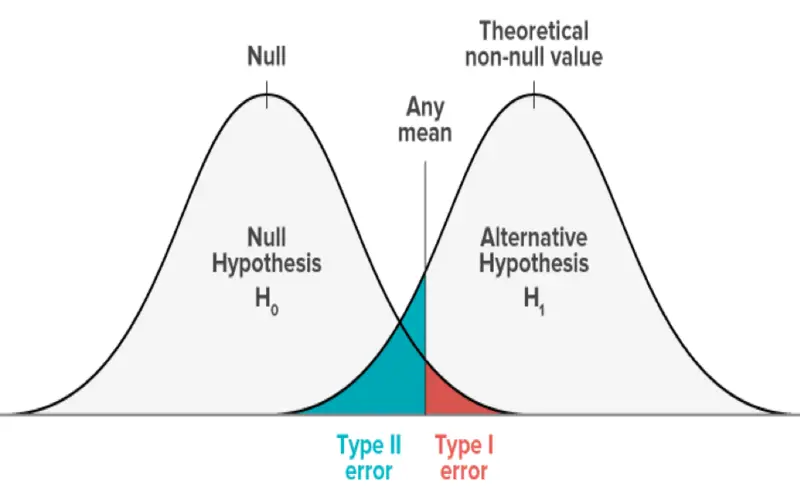

A type II error can be contrasted with a type I error. A type I error involves rejecting a null hypothesis that is actually true, whereas a type II error occurs when a false null hypothesis is not rejected. In effect, a type II error rejects the alternative hypothesis even though the observed effect is not due to chance.

Understanding a Type II Error

A type II error, also known as a beta error or an error of the second kind, confirms an idea that should have been rejected. An example would be claiming that two observations are the same when they are in fact different. A type II error fails to reject the null hypothesis even though the alternative hypothesis is the true state of nature. In other words, a false finding is accepted as true.

A type II error can be made less likely by loosening the criteria for rejecting a null hypothesis (H0). For example, if an analyst treats any result that falls within the bounds of a 95% confidence interval as statistically insignificant (a negative result), then switching to a 90% confidence interval narrows the bounds, yields fewer negative results, and lowers the likelihood of a false negative.
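The move from a 95% to a 90% interval can be sketched numerically. The snippet below is a minimal illustration, assuming a normally distributed estimate with a known standard error and a null value of 0. The function name and the estimate of 1.8 standard errors are hypothetical, chosen only to show a result that changes verdict when the bounds narrow.

```python
from statistics import NormalDist

def is_significant(estimate, std_error, confidence):
    """Return True if the null value 0 falls outside the two-sided interval."""
    # Critical z value for the requested two-sided confidence level
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    half_width = z_crit * std_error
    return abs(estimate) > half_width

# Hypothetical estimate that sits 1.8 standard errors away from the null value
estimate, std_error = 1.8, 1.0

for confidence in (0.95, 0.90):
    rejected = is_significant(estimate, std_error, confidence)
    verdict = "reject H0" if rejected else "fail to reject H0"
    print(f"{confidence:.0%} interval: {verdict}")
```

The same estimate counts as a negative result under the wider 95% bounds and as a positive result under the narrower 90% bounds, which is why narrowing the bounds produces fewer negatives overall.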

However, taking these steps usually makes a type I error (a false positive) more likely. When conducting a hypothesis test, the probability or risk of making either a type I or a type II error should be considered.

Steps taken to reduce the likelihood of a type II error tend to increase the likelihood of a type I error.

Type I Errors vs. Type II Errors

A type II error differs from a type I error, which rejects the null hypothesis when it is actually true (a false positive). The probability of committing a type I error equals the significance level chosen for the hypothesis test. Consequently, if the significance level is set at 0.05, there is a 5% chance of a type I error when the null hypothesis is true.
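The link between the significance level and the type I error rate can be checked with a small simulation. This is a sketch under stated assumptions rather than a definitive procedure: it uses a two-sided z-test on normally distributed data with a known standard deviation of 1, and the function name, sample size, trial count, and seed are all illustrative choices.

```python
import random
from statistics import NormalDist, fmean

def type_i_error_rate(alpha=0.05, n=30, trials=5000, seed=0):
    """Estimate how often a two-sided z-test rejects a true null hypothesis."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(trials):
        # Data are drawn with mean 0, so H0 (mean = 0) is true by construction
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        z_stat = fmean(sample) * n ** 0.5  # known standard deviation of 1
        if abs(z_stat) > z_crit:
            rejections += 1  # rejecting a true null: a type I error
    return rejections / trials

print(type_i_error_rate())  # expected to land close to alpha
```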

The probability of committing a type II error is denoted beta and equals one minus the power of the test. Increasing the sample size raises the test's power and so lowers the probability of a type II error.
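The relationship between sample size, beta, and power can also be simulated. The sketch below assumes a two-sided z-test with a known standard deviation of 1 and a hypothetical true mean of 0.5, so the null hypothesis (mean = 0) is false in every trial. The function name and all parameter values are illustrative.

```python
import random
from statistics import NormalDist, fmean

def type_ii_error_rate(n, true_mean=0.5, alpha=0.05, trials=4000, seed=1):
    """Estimate beta: how often a two-sided z-test misses a real effect."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    misses = 0
    for _ in range(trials):
        # H0 says the mean is 0, but the data actually have mean true_mean
        sample = [rng.gauss(true_mean, 1.0) for _ in range(n)]
        z_stat = fmean(sample) * n ** 0.5
        if abs(z_stat) <= z_crit:
            misses += 1  # failed to reject a false null: a type II error
    return misses / trials

for n in (10, 40):
    beta = type_ii_error_rate(n)
    print(f"n={n}: beta ~ {beta:.2f}, power ~ {1 - beta:.2f}")
```

With the larger sample, the estimated beta should fall noticeably and the power should rise by the same amount, since the two always sum to one.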

Statistical publications often report the type II error risk alongside the overall significance level. For instance, a 2021 meta-analysis on using exosomes to treat spinal cord injuries reported a type II error risk of 0.1 and an overall significance level of 0.05.

A Type II Error Example

Suppose a biotechnology company wants to compare how effective two of its drugs are at treating diabetes. The null hypothesis, H0, states that the two drugs are equally effective; it is the claim the company hopes to reject using a two-tailed test. The alternative hypothesis, Ha, states that the two drugs are not equally effective. It is the state of nature that is supported when the null hypothesis is rejected.

To compare the treatments, the company runs a large clinical trial of 3,000 patients with diabetes. It randomly splits the patients into two equal groups, giving one group one treatment and the other group the other treatment. It chooses a significance level of 0.05, meaning it is willing to accept a 5% chance of committing a type I error, that is, of rejecting the null hypothesis when it is true.

Assume the beta is calculated to be 0.025, or 2.5%. The probability of committing a type II error is therefore 2.5%, and the power of the test is 97.5%. If the two drugs are not equally effective, the null hypothesis should be rejected. A type II error occurs if the company fails to reject the null hypothesis when the drugs are not equally effective.
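To put the numbers in this example in perspective, the sketch below works out what a beta of 0.025 implies. The power is 1 - 0.025 = 0.975, and the code estimates how large the difference between the two drugs would need to be for a trial with 1,500 patients per group to reach that power. It assumes a two-sided, two-sample z-test with a common, known standard deviation, and it ignores the negligible chance of rejecting in the wrong direction. None of these assumptions come from the example itself, and the function name is hypothetical.

```python
from statistics import NormalDist

def detectable_effect(n_per_group, alpha=0.05, beta=0.025):
    """Standardized difference detected with power 1 - beta.

    Assumes a two-sided, two-sample z-test with equal group sizes and a
    common, known standard deviation (an illustrative simplification).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(1 - beta)
    # Standard error of the difference in means, in standard-deviation units
    std_error = (2 / n_per_group) ** 0.5
    return (z_alpha + z_beta) * std_error

beta = 0.025
print(f"power = {1 - beta:.3f}")
print(f"detectable effect = {detectable_effect(1500):.3f} standard deviations")
```

Under these assumptions, a difference of roughly 0.14 standard deviations between the two groups would be enough to give the trial a 97.5% chance of rejecting the null hypothesis.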

What distinguishes Type I errors from Type II errors?

A type I error occurs when a null hypothesis that is actually true in the population is rejected. This kind of error is a false positive. A type II error, on the other hand, occurs when a null hypothesis that is actually false in the population is not rejected. This kind of error is a false negative.

What Causes Type II Errors?

A type II error is commonly caused by a test with insufficient statistical power. The higher the statistical power, the greater the chance of avoiding a type II error. It is often recommended to set the statistical power to at least 80% before conducting any testing.
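Targeting 80% power before testing usually means solving for a sample size. The snippet below is a minimal sketch using the standard normal-approximation formula for a two-sided, two-sample comparison of means with equal group sizes. The function name and the standardized effect sizes passed to it are illustrative assumptions, and a t-test based calculation would give slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate patients per group needed to reach the target power.

    effect_size is the assumed true difference in means divided by the
    common standard deviation.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = z.inv_cdf(power)          # quantile matching the target power
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)

print(sample_size_per_group(0.5))  # 63 per group under these assumptions
print(sample_size_per_group(0.2))  # smaller effects need far more data
```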

What Factors Affect the Magnitude of Type II Error Risk?

The risk of a type II error decreases as the study's sample size grows. It also decreases as the true population effect size increases, because larger effects are easier to detect. Finally, the alpha level chosen for the study affects the risk: the lower the alpha level, the greater the chance of a type II error.
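All three factors can be seen in a closed-form calculation. The sketch below computes beta for a two-sided, one-sample z-test with a known standard deviation, which is a simplifying assumption. The function name and the baseline values (50 observations, an effect of 0.3 standard deviations, alpha of 0.05) are hypothetical.

```python
from statistics import NormalDist

def type_ii_risk(n, effect_size, alpha):
    """Beta for a two-sided one-sample z-test (known standard deviation)."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = effect_size * n ** 0.5  # mean of the z statistic under Ha
    # Probability that the statistic stays inside the non-rejection region
    return z.cdf(z_crit - shift) - z.cdf(-z_crit - shift)

baseline = type_ii_risk(n=50, effect_size=0.3, alpha=0.05)
print(f"baseline:       {baseline:.3f}")
print(f"larger sample:  {type_ii_risk(100, 0.3, 0.05):.3f}")
print(f"larger effect:  {type_ii_risk(50, 0.5, 0.05):.3f}")
print(f"stricter alpha: {type_ii_risk(50, 0.3, 0.01):.3f}")
```

Changing one input at a time shows the direction of each effect: a larger sample or a larger true effect lowers beta, while a stricter alpha raises it.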

How Can a Type II Error Be Reduced?

A type II error cannot be avoided entirely. However, increasing the sample size lowers the risk. Raising the significance level lowers it as well, but doing so increases the risk of committing a type I error instead.

The Final Word

A type II error in statistics produces a false negative, meaning that an effect exists but was missed by the analysis (that is, the null hypothesis was not rejected when it should have been). Insufficient power in a statistical test, which often stems from a sample size that is too small, may lead to a type II error. Increasing the sample size can reduce the likelihood of making a type II error. Type II errors can be contrasted with type I errors, which are false positives.

Conclusion

- A type II error is the failure to reject a null hypothesis that is actually false for the population; its probability is denoted beta.

- A type II error is a false negative.

- Looser criteria for rejecting a null hypothesis reduce the chance of a type II error but raise the chance of a false positive.

- The risk of a type II error depends on the sample size, the true population effect size, and the predetermined alpha level.

- Analysts must weigh the likelihood and consequences of type I and type II errors.